WebGL, blending, and why you're probably doing it wrong.

Premultiplied alpha.

If you’ve dabbled in graphics at all, you’ve probably heard this term. You might even know what it is, but do you know when to use it and when not to? Are you using it by default? Do you know why browsers default to premultiplied blending of WebGL canvases? Do you know why artists prefer to work with images that are not premultiplied?

Eric Haines wrote a great piece recently about why graphics programmers need to understand premultiplied alpha to avoid the all too common fringing problems. If you read that and already believe him or have already switched, you can skip my two cents. If you haven’t read it, I’ll wait.

What we're going to talk about here is a different reason why understanding premultiplied alpha is important, and why premultiplied alpha is more important with WebGL than it was with OpenGL.

Ten Dollar Words

I was confused by premultiplied alpha for a long time. I technically knew what “pre-multiply” meant, but I didn’t have a strong grasp on what it really was and when to use it. I don’t know if I was lazy or intimidated or embarrassed to admit I didn’t really understand, but I’ve realized I’m not alone.

There’s a ton of confusion about alpha even though it’s a really simple concept. For one thing, I feel like the terminology stinks. To borrow a phrase from “True Detective”, the ten-dollar words we have to describe alpha and blending are actually making the concepts more intimidating and harder to understand than they could be. Premultiplied, Un-premultiplied, Straight, Unassociated... Come on! What do these even mean? (Rhetorical question, please don’t explain it to me.) Why are we stuck with such opaque words? It’s not even the alpha that gets multiplied or associated, it’s the color. Anyway...

I don’t have a better proposal, but I’ve been thinking about the words ‘raw’ and ‘cooked’, where premultiplied is cooked. It makes a decent analogy: cooking raw things is safer, so you already know which one you ought to consume; rendering and compositing count as cooking, as we’ll see in a minute; and you can’t very well un-cook things once they’re cooked.

But I digress.

The Two Kinds of Transparent Images

As you may know, there are two common kinds of transparent images, and they both get rendered differently. When you put a transparent image over a background (aka “compositing”), you have to use a specific blending operation depending on which kind of transparent colors you’re working with.

Aside from the terminology, one reason all this alpha business is confusing is that premultiplied images are the more natural way to think about images, but straight-alpha (aka unpremultiplied, aka unassociated) blending is the more natural way to think about blending.

Premultiplied images are more natural images

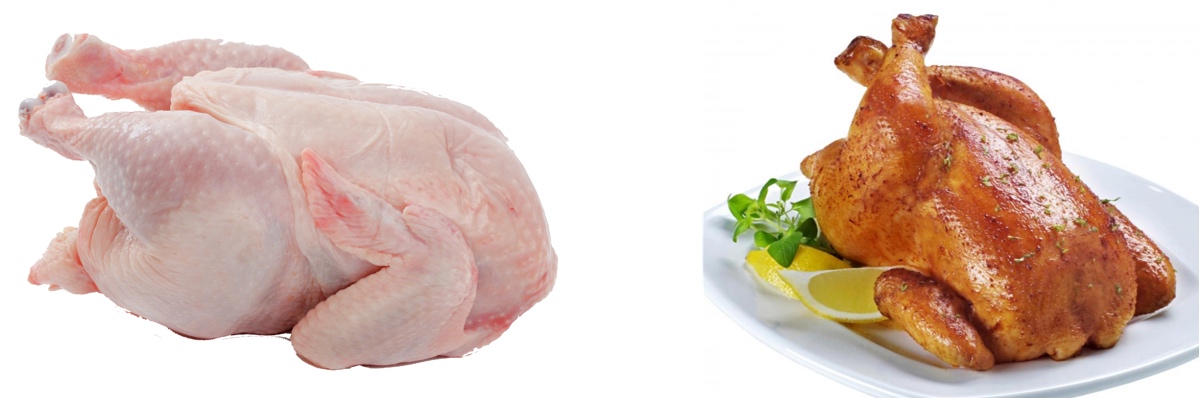

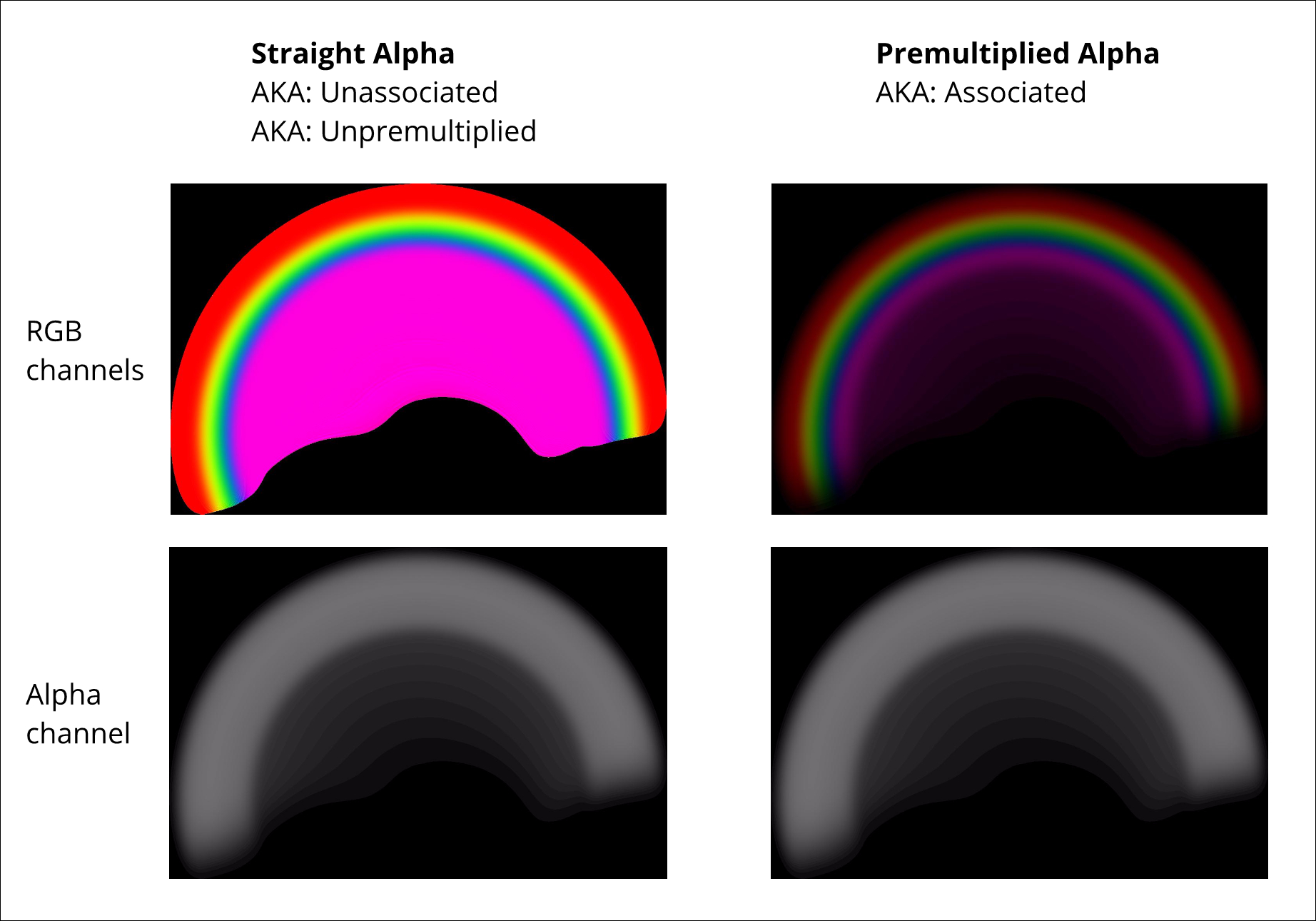

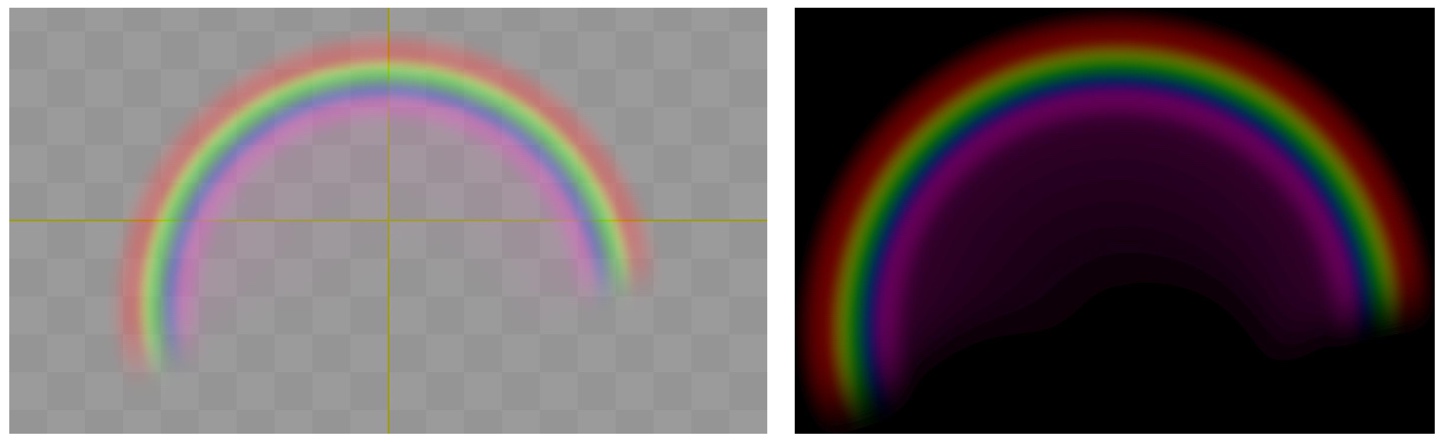

When you think about a transparent image, do you imagine something like this:

Or something like this?

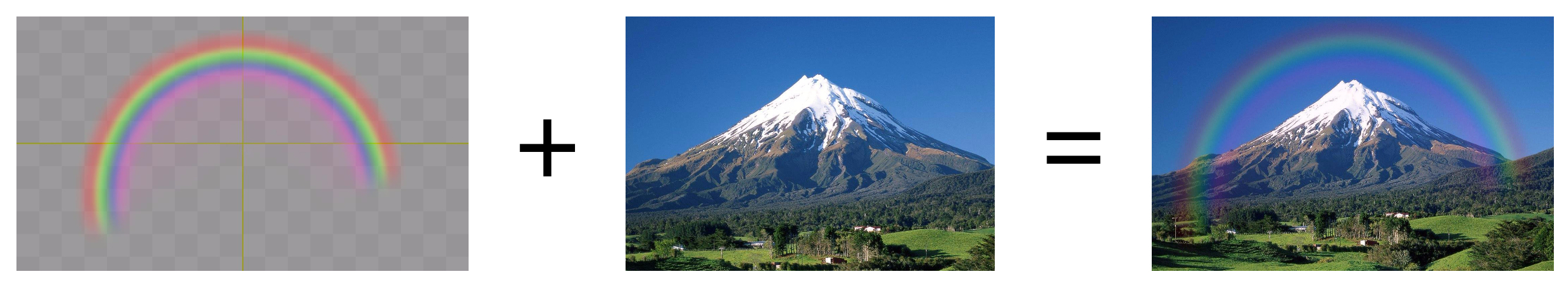

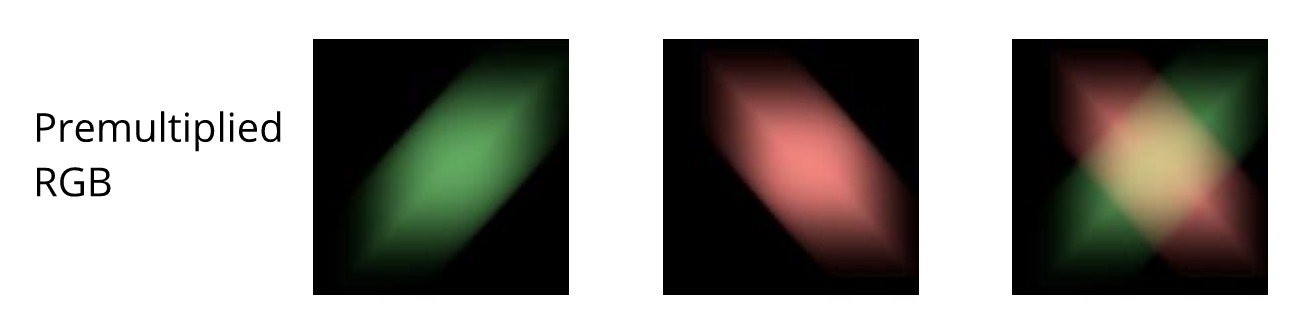

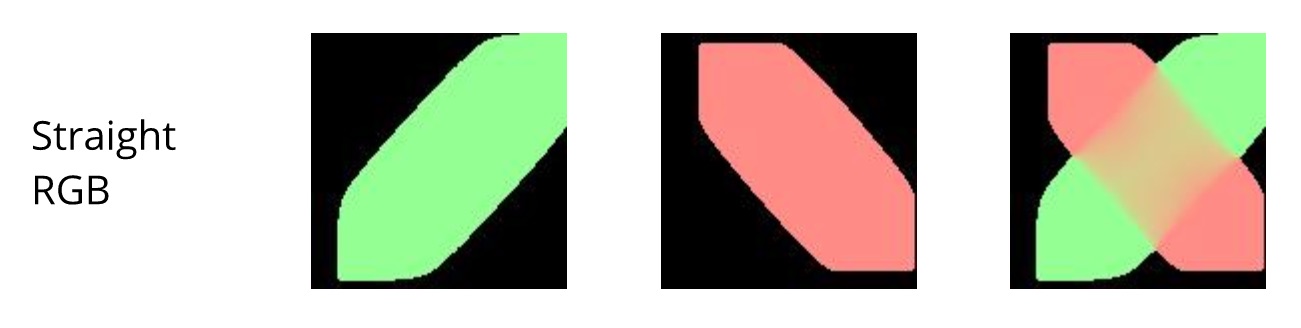

Straight alpha colors are actually really weird, when you start to think about what they look like. What color is a transparent pixel without the transparency? We don’t naturally think about color that way, and strange things start to happen when you do. Consider motion blur for a minute:

Okay, nice. That looks like what I expect.

Blending always produces premultiplied images

There is a much bigger and more important reason why premultiplied is the natural state of an image: the result of the blending equation is premultiplied colors… regardless of whether the inputs are premultiplied or not.

Let that sink in.

Premultiplied colors are what your compositor or renderer spits out, even if you used straight-alpha images with straight-alpha blending. In order to get a straight-alpha image out, you have to add an extra step to UN-pre-multiply, or divide by alpha. And pre-multiplying is bad enough, nobody wants to post-divide.
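To see why, it helps to write the blend out for a single pixel. This is only a sketch in plain JavaScript, not what WebGL runs internally: the names `blendStraightOver` and `unpremultiply` are made up for illustration, and colors are assumed to be `[r, g, b, a]` arrays of floats between 0 and 1.

```javascript
// Blend a straight-alpha source over whatever is already in the buffer.
// Color: src * srcA + dst * (1 - srcA). Alpha: srcA + dstA * (1 - srcA).
function blendStraightOver(src, dst) {
  const [sr, sg, sb, sa] = src;
  const [dr, dg, db, da] = dst;
  const k = 1 - sa;
  return [sr * sa + dr * k, sg * sa + dg * k, sb * sa + db * k, sa + da * k];
}

// The extra step needed to get a straight-alpha image back out.
function unpremultiply([r, g, b, a]) {
  if (a === 0) return [0, 0, 0, 0]; // the color of a fully transparent pixel is unrecoverable
  return [r / a, g / a, b / a, a];
}

const clear = [0, 0, 0, 0];     // empty, fully transparent buffer
const halfRed = [1, 0, 0, 0.5]; // straight alpha: full red at 50% opacity

const rendered = blendStraightOver(halfRed, clear); // [0.5, 0, 0, 0.5]
const straight = unpremultiply(rendered);           // [1, 0, 0, 0.5]
```

The red channel of `rendered` is 0.5, not 1: the source color came out already multiplied by its alpha, even though the input was straight.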

What happens when you want to use your rendered result as the source for something else? Unless you convert your rendered output back to straight alpha, your second step gets a premultiplied image input.

Straight alpha is the only choice for painting

Straight alpha is the only thing that makes any sense if you have to paint in the alpha channel by hand. Artists can’t work with premultiplied images at all; they need independent control over the colors & masks. Think about what it would look like if you tried to paint colors in premultiplied images. Think about what it would take to write the code for a tool like that. The opacity information in a premultiplied image is both in the alpha channel and baked into the color channels, so painting the mask in a premultiplied image would mean continuously un-multiplying and re-multiplying the color channels.

Plus, every time you convert premultiplied to straight alpha, you divide, and you lose precision in proportion to how transparent your colors are. Do it a few times, and you’ll destroy your mask completely.
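Here is a rough illustration of that precision loss, assuming 8-bit channels and simple round-to-nearest. The helper names are hypothetical, and real tools may round differently.

```javascript
// 8-bit premultiply: scale a color channel (0..255) by alpha (0..255).
function premultiply8(color, alpha) {
  return Math.round((color * alpha) / 255);
}

// 8-bit un-premultiply: divide by alpha, clamped to the valid range.
function unpremultiply8(color, alpha) {
  if (alpha === 0) return 0; // nothing left to recover
  return Math.min(255, Math.round((color * 255) / alpha));
}

// Straight -> premultiplied -> straight again.
function roundTrip8(color, alpha) {
  return unpremultiply8(premultiply8(color, alpha), alpha);
}

const opaque = roundTrip8(200, 255);     // 200: no loss when fully opaque
const half = roundTrip8(200, 128);       // 199: a little loss at about 50%
const nearlyClear = roundTrip8(200, 3);  // 170: badly off when almost transparent
```

The more transparent the pixel, the fewer distinct values survive the multiply, so the divide has less to work with.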

Straight alpha blending is more natural blending

With straight alpha, your blending function is symmetric. A*x + B*(1-x). We’re used to that math, and it just feels right, it makes sense. Premultiplied blending, A + B*(1-x), looks strange, doesn’t it?
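Strange-looking or not, the two forms give the same answer over an opaque background once A has been multiplied by x ahead of time. A tiny single-channel sketch, with function names invented for this example:

```javascript
// Straight-alpha blend of one channel: A*x + B*(1-x).
function blendStraight(a, b, x) {
  return a * x + b * (1 - x);
}

// Premultiplied blend of one channel: A' + B*(1-x), where A' = A*x.
function blendPremultiplied(aPremultiplied, b, x) {
  return aPremultiplied + b * (1 - x);
}

const x = 0.25; // source opacity
const a = 0.8;  // source channel value (straight)
const b = 0.4;  // background channel value

const viaStraight = blendStraight(a, b, x);
const viaPremultiplied = blendPremultiplied(a * x, b, x);
// Both come out to about 0.5.
```

The multiply has not gone away; it has just moved from blend time to an earlier step.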

Tutorials on transparency usually start with straight-alpha blending. The OpenGL FAQ on transparency says to use straight alpha blending. That’s just the way most of us got introduced to how blending works.

Many people learn to copy & paste this function call to get transparent blending, which assumes straight-alpha colors:

gl.blendFunc(gl.SRC_ALPHA, gl.ONE_MINUS_SRC_ALPHA);

Did you know that blending function is wrong if your result is transparent? It doesn’t calculate the resulting alpha channel correctly. I didn’t know this because I hadn’t thought about it, and I was really confused when I first bumped into it. But the answer is simple. We don’t want to interpolate alpha. We don’t want a mid-point or a weighted average of the alpha channels. Transparent things in real life don’t blend. The colors blend, but the opacity gets monotonically more opaque as things pile up -- stack two transparent gels and the result is more opaque than either one individually. It’s easy to see that when you interpolate two different alpha values, the result is less opaque than one of them, and that’s just the wrong thing to do.

The correct blending function with straight-alpha input colors and transparent output colors is:

gl.blendFuncSeparate(gl.SRC_ALPHA, gl.ONE_MINUS_SRC_ALPHA, gl.ONE, gl.ONE_MINUS_SRC_ALPHA);

Or, you can blend premultiplied colors, and it all gets easier:

gl.blendFunc(gl.ONE, gl.ONE_MINUS_SRC_ALPHA);
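To make the alpha problem concrete, here is what each setup does to the alpha channel alone. This is my own arithmetic sketch of the blend factors, with made-up function names, not code from any library.

```javascript
// Alpha result when SRC_ALPHA / ONE_MINUS_SRC_ALPHA is applied to every channel,
// alpha included: srcA * srcA + dstA * (1 - srcA).
function alphaWithSrcAlphaFactor(srcA, dstA) {
  return srcA * srcA + dstA * (1 - srcA);
}

// Alpha result when the alpha factors are ONE / ONE_MINUS_SRC_ALPHA, as in the
// blendFuncSeparate call and the premultiplied blendFunc call:
// srcA + dstA * (1 - srcA).
function alphaWithOneFactor(srcA, dstA) {
  return srcA + dstA * (1 - srcA);
}

// Draw a 50% transparent layer over a fully opaque one.
const wrong = alphaWithSrcAlphaFactor(0.5, 1.0); // 0.75
const right = alphaWithOneFactor(0.5, 1.0);      // 1
```

With the copy & paste blend function, drawing something half transparent over an opaque pixel leaves that pixel 75% opaque, so whatever is behind the buffer starts to show through.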

WebGL canvases are always composited!

A WebGL canvas is just another (potentially transparent) element on a page. It is always composited over the page by your browser. The browser defaults to compositing a WebGL canvas using premultiplied alpha because, as we talked about above, your colors come out of the WebGL renderer in premultiplied form. Unless you’re consciously preventing it, when you call gl.readPixels(), the image you get back is a premultiplied image.

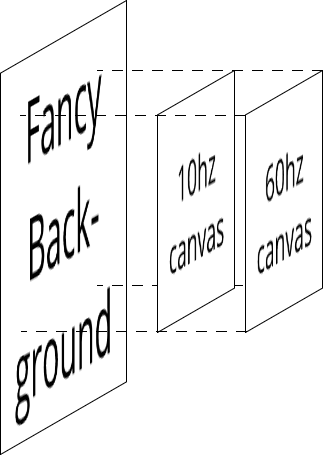
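For reference, this is roughly how I would set up a canvas that goes along with the browser’s default. Treat it as a sketch: the function name is hypothetical, and spelling out `alpha` and `premultipliedAlpha` is only for clarity, since both already default to true.

```javascript
// Get a WebGL context whose drawing buffer the browser will composite
// as premultiplied, and configure blending to match.
function getPremultipliedContext(canvas) {
  const gl = canvas.getContext("webgl", {
    alpha: true,              // keep an alpha channel in the drawing buffer
    premultipliedAlpha: true, // tell the compositor our colors are premultiplied
  });
  if (!gl) throw new Error("WebGL is not available");

  gl.clearColor(0, 0, 0, 0);                    // transparent black is valid premultiplied "nothing"
  gl.enable(gl.BLEND);
  gl.blendFunc(gl.ONE, gl.ONE_MINUS_SRC_ALPHA); // premultiplied "over"
  return gl;
}

// In a page (the "#overlay" selector is just an example):
// const gl = getPremultipliedContext(document.querySelector("#overlay"));
```

Passing `premultipliedAlpha: false` instead asks the browser to treat the buffer as straight alpha, which is the "consciously preventing it" route.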

This hit me in the face a couple of years ago when I stacked two WebGL canvases on top of each other and started rendering transparent things in both buffers over an HTML background. I was trying to use the straight-alpha blending I knew, and it didn’t look right or do what I expected.

There are some cool things you can do when you stack canvases on top of each other. For one thing, you can render your separate buffers at different frame rates -- for example, a background that’s slow to render but not moving fast can be done incrementally in the bottom layer, while you have a high frame rate update in the interactive upper layer. You can use HTML instead of WebGL to load fancy backgrounds, since it’s easier to render images with HTML than to draw textures in WebGL.

With OpenGL, only the people who learned how to do advanced multi-pass rendering were forced to grok premultiplied alpha. Now WebGL inside HTML has made it really easy to combine multiple sources, and that’s one reason why it’s more important to know about premultiplied blending than it used to be.

That’s my big reveal, my main point, the beef in this cheeseburger. Knowing how to use premultiplied blending gives you the ammo to deal with compositing WebGL canvases.

There are a bunch more well-known reasons you should use or at least know about premultiplied images. Making mip-maps and blurring textures should be done in premultiplied space, and you get a bag of extra cool tricks to use if you composite using premultiplied blending. The links at the end explain more.
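As a small taste of the filtering problem, here is the simplest possible “blur”: averaging two neighboring texels, the way a mip-map level or a bilinear fetch would. The helpers are hypothetical and colors are `[r, g, b, a]` floats.

```javascript
// Average two texels channel by channel.
function average(p, q) {
  return p.map((v, i) => (v + q[i]) / 2);
}

// Convert a straight-alpha color to premultiplied.
function premultiply([r, g, b, a]) {
  return [r * a, g * a, b * a, a];
}

const opaqueRed = [1, 0, 0, 1];      // a visible red texel
const invisibleGreen = [0, 1, 0, 0]; // fully transparent, but its hidden color is green

// Filtering straight-alpha colors lets the invisible green leak in.
const straightAvg = average(opaqueRed, invisibleGreen); // [0.5, 0.5, 0, 0.5]

// Filtering premultiplied colors gives red at half coverage, no green.
const premultipliedAvg = average(premultiply(opaqueRed), premultiply(invisibleGreen)); // [0.5, 0, 0, 0.5]
```

That leaked green is the fringe you see around the edges of cut-out textures.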

So recap - what does this all mean? Here’s the cheat sheet:

- Know which images come from artists and pre-multiply them. It can be done as a build step, or converted on the fly, but don’t ask artists to use or think about premultiplied images. WebGL makes it easy to pre-multiply images during load: see gl.pixelStorei combined with gl.UNPACK_PREMULTIPLY_ALPHA_WEBGL.

- Know which images come from a previous render pass of some sort, and leave those alone, because they’re already premultiplied.

- Use gl.blendFunc(gl.ONE, gl.ONE_MINUS_SRC_ALPHA); as your default choice for textures, not the other blend funcs. Especially don’t use the popular gl.blendFunc(gl.SRC_ALPHA, gl.ONE_MINUS_SRC_ALPHA), which doesn’t handle destination alpha correctly.

- Pay attention to your shaders and make sure they’re consuming and producing premultiplied colors as needed.
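Put together, the cheat sheet might look something like the sketch below. The function name, the uniform `u_opacity`, and the varying `v_texCoord` are placeholders I made up; `image` is assumed to be a loaded straight-alpha image such as an `HTMLImageElement`, and texture filtering and wrap settings are left out.

```javascript
// Upload an artist-authored (straight-alpha) image, premultiplying on load.
function uploadArtistTexture(gl, image) {
  const texture = gl.createTexture();
  gl.bindTexture(gl.TEXTURE_2D, texture);

  // Ask WebGL to multiply RGB by alpha while unpacking the image.
  gl.pixelStorei(gl.UNPACK_PREMULTIPLY_ALPHA_WEBGL, true);
  gl.texImage2D(gl.TEXTURE_2D, 0, gl.RGBA, gl.RGBA, gl.UNSIGNED_BYTE, image);
  // Restore the default so later uploads are not affected by accident.
  gl.pixelStorei(gl.UNPACK_PREMULTIPLY_ALPHA_WEBGL, false);

  return texture;
}

// A fragment shader that keeps colors premultiplied: to fade a premultiplied
// texel, scale all four channels together rather than alpha alone.
const fadeFragmentShader = `
precision mediump float;
uniform sampler2D u_texture; // premultiplied texels
uniform float u_opacity;     // hypothetical fade amount, 0..1
varying vec2 v_texCoord;
void main() {
  gl_FragColor = texture2D(u_texture, v_texCoord) * u_opacity;
}`;
```

Render targets from an earlier pass skip `uploadArtistTexture` entirely, since they already hold premultiplied colors.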

Now go stack some WebGL canvases on top of each other and over your page, and start rendering transparency with confidence.

That’s it, that’s my case. I hope I’ve added some clarity, or at least piqued your interest. If you’re working with WebGL, premultiplied alpha is worth taking some extra time to understand completely; it will pay off in spades.

Further Reading

- http://webglfundamentals.org/webgl/lessons/webgl-and-alpha.html (Great info, a fantastic resource, but for some reason gently guides the reader away from premultiplied alpha.)

- http://www.realtimerendering.com/blog/gpus-prefer-premultiplication/ (Excellent and detailed explanation of the fringing and filtering problems with straight-alpha images.)

- http://tomforsyth1000.github.io/blog.wiki.html#[[Premultiplied%20alpha%20part%202]] (Explains it all, but took me several readings to get my head around it.)

- https://en.wikipedia.org/wiki/Alpha_compositing (Like so many WP articles, very dense, super-detailed, super-correct, but all math and no intuition.)

- http://keithp.com/~keithp/porterduff/p253-porter.pdf (Before there was OpenGL, before there was Iris GL, before there were even computers, there was The Original Source.)